Buffer Engineering Report

December 2016

- Requests for buffer.com: 189 million (-7.8%)
- Avg. response for buffer.com: 254 ms (-4.2%)
- Requests for api.bufferapp.com: 288 million (-33.9%*)
- Avg. response for api.bufferapp.com: 269 ms (-33.9%)
- Code reviews given: 51% of pull requests (-12%)

Buffer Kubernetes Cluster

- 9 nodes in cluster

- 149 pods

- 652 million requests handled

- Serving 58% of total traffic

Bugs and Quality

- 3 S1 (severity 1) bugs still open: 13 opened, 10 closed (77% smashed, 8% down from November)

- 8 S2 (severity 2) bugs still open: 25 opened, 17 closed (68% smashed, 2% up from November)
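As a quick worked example of how these figures fit together, here's a small Python sketch. It assumes (our reading, not an official definition) that "smashed" means bugs closed divided by bugs opened during the month, and that the headline count is the number of bugs left open. The `bug_summary` function is made up for illustration.

```python
def bug_summary(opened: int, closed: int) -> tuple[int, int]:
    """Return (bugs still open, percentage smashed rounded to a whole number).

    Assumption: "smashed" = closed / opened for the month, and the
    headline count is opened - closed.
    """
    if opened <= 0:
        raise ValueError("opened must be positive")
    return opened - closed, round(100 * closed / opened)


for severity, opened, closed in [("S1", 13, 10), ("S2", 25, 17)]:
    remaining, pct = bug_summary(opened, closed)
    print(f"{severity}: {remaining} open, {pct}% smashed")
# S1: 3 open, 77% smashed
# S2: 8 open, 68% smashed
```

Under that reading, both the open counts and the smash percentages match the numbers above.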

Quality focus: Reducing updates in error state

Have you ever had a Buffer update that failed to post to a network? We hope not! But we know these happen.

José, Dan and Steven have been focused on a major project to reduce the number of social media updates that get stuck in an error state and as a result fail to post.

The first stage of this project was completed in December: a real-time dashboard in Datadog that we can use to track down these errors. With increased visibility into what’s going wrong, José will be able to set a target percentage of error-free posts, giving us a clearly defined goal for successful updates, and monitor how we’re doing in real time.
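To make the idea of a target concrete, here's a minimal Python sketch of the arithmetic such a monitor might run over a window of updates. It is not Datadog's API. The `PostCounts` type, the function names and the 99.5% target in the example are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PostCounts:
    sent: int     # updates that posted successfully in the window
    errored: int  # updates that ended up stuck in an error state


def error_free_percentage(counts: PostCounts) -> float:
    """Share of updates in the window that posted without error, as a percentage."""
    total = counts.sent + counts.errored
    if total == 0:
        return 100.0  # nothing attempted, so nothing failed
    return 100.0 * counts.sent / total


def meets_target(counts: PostCounts, target_pct: float) -> bool:
    """True when the error-free percentage is at or above the target."""
    return error_free_percentage(counts) >= target_pct


# Hypothetical numbers and target, for illustration only.
window = PostCounts(sent=9_990, errored=10)
print(error_free_percentage(window), meets_target(window, target_pct=99.5))
```

Keeping the target as a plain parameter means it can be tightened over time without changing how the percentage itself is computed.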

We’re so excited to be reaching this new level of quality to make Buffer even more reliable for our users!

Experimenting with holiday deploy freezes

Over the holiday season, much of the team was out to enjoy some disconnected time with family and friends.

To balance delivering a great customer experience with our value of ‘work smarter, not harder,’ the engineering team instituted a “code freeze” from December 24 to January 3.

During this time, only critical bug fixes were shipped and engineers who were online held off on non-critical deploys. We hoped this would also reduce the risk of creating new bugs for customers that might mean more emails for our support team. This worked really well in reducing the risk of downtime or disruption over the holidays!
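A freeze like this can also be enforced in tooling rather than purely by convention. Here's a minimal sketch of a deploy gate in Python. It assumes the window is inclusive on both ends and uses a hypothetical `critical_fix` flag to mark the exceptions. This isn't necessarily how our own deploy process handled it.

```python
from datetime import date

# Freeze window from the report: December 24, 2016 to January 3, 2017.
# Assumption: both ends are inclusive.
FREEZE_START = date(2016, 12, 24)
FREEZE_END = date(2017, 1, 3)


def deploy_allowed(day: date, critical_fix: bool) -> bool:
    """Outside the freeze anything may ship; inside it, only critical fixes."""
    in_freeze = FREEZE_START <= day <= FREEZE_END
    return critical_fix or not in_freeze


print(deploy_allowed(date(2016, 12, 27), critical_fix=False))  # False
print(deploy_allowed(date(2016, 12, 27), critical_fix=True))   # True
```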

Users are testing our new composer now!

The rollout of our new Buffer composer, which will allow users to compose updates separately for each social network, now has 1,000 new users testing it out! The results? So far, so smooth.

We’re really proud of the milestone that Pioul and Emily have reached in getting this crucial new feature to the next stage!

Brand new Twitter analytics coming soon to Buffer for Business

Alex and Tigran have been hard at work on the new analytics for Twitter that we’ll soon be sharing with all our Buffer for Business customers and trialists!

The raw data is far more accurate thanks to Gnip, and Alex has built out a super slick and snappy experience with React + Redux, creatively using iframes to drop the new stack into our older Backbone dashboard shell.

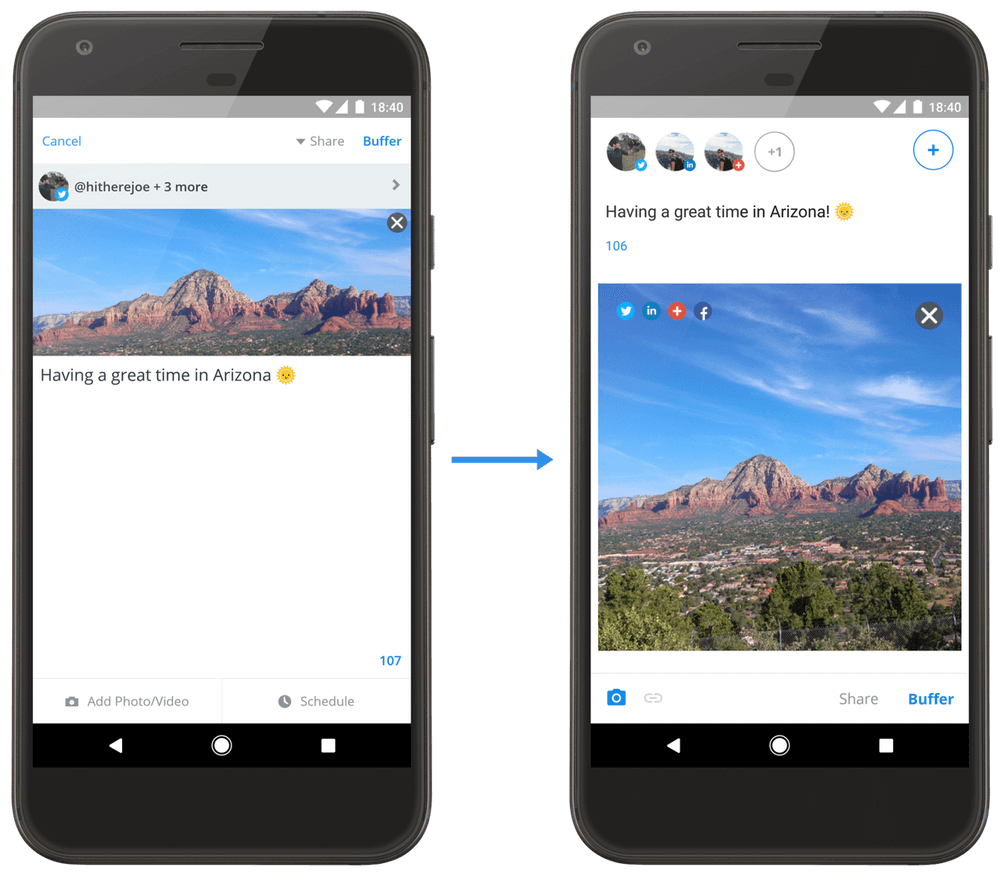

Sharing our Android journey with the world

The new Android composer released in November was rolled out further last month and Joe wrote up a great post about the Android team’s Journey from Legacy Code to Clean Architecture.

Android has had many owners at Buffer, from Sunil (who started at Buffer as an Android contractor!) to Marcus and Joe today. It’s been so inspiring seeing how the team has tackled that debt!

Both Marcus and Joe spoke about the Buffer Android journey at Londroid (the Android Meetup in London) and shared how they went about refactoring the composer. You can find the video recording and slides online. We’re so happy they could share their learnings with the Android community!

The Android team also open sourced this handy text input component! With more than 500 stars now, we’re really excited that Joe was able to share something that’s proved so useful to the community.

Similar to what Andy’s done on iOS, Marcus rebuilt the settings view and integrated Google’s new Firebase Cloud Messaging (FCM) push notification system, which will help us and our users send and receive push notifications more reliably.

The big technical and design update to the Android app is also coming along nicely, and the team will share more details, including when it’s going to be released, in the coming weeks. Stay tuned!

iOS: Easier path to upgrades and downgrades

With the iOS App Store shut down for updates and Jordan out on paternity leave, December was a great few weeks to get some housekeeping done throughout the Buffer iOS app.

Andy completely refactored the settings view, which has been needing some improvements for quite some time. It’s now easy for us to add and remove sections based on device, account, plans and permissions. (For example, we can now hide the Touch ID option from non-Touch ID enabled devices.)

We also moved all of our in-app subscriptions into a single group, which lets us give users easier upgrade and downgrade paths between plans. (Since subscriptions were introduced in June, our plans had been in separate groups.) This also helps us prevent users from accidentally having two plans.

Meanwhile, Andy continued to iterate on a couple of concepts to improve Instagram Reminders. We’ve had a few features out in beta and have been collecting feedback from our testers on whether they are useful.

The v6.2 update to the app is coming along nicely, with many bug fixes and new features. We’ve also added acknowledgements to all of the open source projects we make use of in the app to give back to all the folks who make things a little easier for us and others.

Profile access token sharing service: Will it be Node.js or Golang?

As we work on breaking up our monolithic web app and moving to microservices, another core piece has fallen into place with the new profile access token service built out by Harrison.

This service provides the communication layer for other microservices that need a social network’s access token in order to interact with that network’s API. This core service was built using Vault for encryption and key management to ensure that there are never any unencrypted tokens floating around.
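Here's a rough sketch of the shape of such a service, in Python for illustration. Other services ask for a token by profile ID, and the service only ever holds ciphertext, decrypting on request. The `ProfileTokenService` class and the injected `decrypt` callable are hypothetical. In a real deployment that callable would wrap a call to Vault (for example, its transit engine), which this sketch does not do.

```python
from typing import Callable, Dict


class ProfileTokenService:
    """Hands out a social profile's access token to other services on request.

    Only ciphertext is kept in memory; plaintext exists only in the return
    value of get_token(). `decrypt` is injected (hypothetically backed by
    Vault) so this class does no cryptography itself.
    """

    def __init__(self, decrypt: Callable[[bytes], str]) -> None:
        self._ciphertexts: Dict[str, bytes] = {}
        self._decrypt = decrypt

    def store(self, profile_id: str, ciphertext: bytes) -> None:
        # Callers encrypt before storing; this service never receives plaintext.
        self._ciphertexts[profile_id] = ciphertext

    def get_token(self, profile_id: str) -> str:
        try:
            ciphertext = self._ciphertexts[profile_id]
        except KeyError:
            raise LookupError(f"no token stored for profile {profile_id!r}") from None
        return self._decrypt(ciphertext)


# Stand-in decrypt function: NOT real encryption, illustration only.
def fake_decrypt(ciphertext: bytes) -> str:
    return ciphertext.removeprefix(b"enc:").decode()


service = ProfileTokenService(decrypt=fake_decrypt)
service.store("profile-123", b"enc:example-token")
print(service.get_token("profile-123"))  # example-token
```

Injecting the decryption step keeps the token-lookup logic the same whether the backend is a test stub or a key-management system.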

We’re experimenting to see whether Node.js or Golang will ultimately perform best, with a first iteration serving up tokens built in Node and a Go implementation to follow soon. The goal is to benchmark the performance differences and learn early in our journey which language best suits this type of task, so we can apply that learning to future services. Bets on which is faster are welcome!
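When comparing two implementations like this, tail latency often matters as much as the average. Here's a minimal Python sketch that summarizes recorded request latencies with a nearest-rank percentile. The function names and the sample numbers are hypothetical. They do not describe our actual benchmark setup or results.

```python
import math
from statistics import mean


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile of `samples`, for 0 < p <= 100."""
    if not samples or not 0 < p <= 100:
        raise ValueError("need samples and 0 < p <= 100")
    ordered = sorted(samples)
    rank = math.ceil(p / 100 * len(ordered))  # 1-based rank
    return ordered[rank - 1]


def summarize(latencies_ms: list[float]) -> dict[str, float]:
    """Mean, median and p99 latency for one implementation's benchmark run."""
    return {
        "mean": mean(latencies_ms),
        "p50": percentile(latencies_ms, 50),
        "p99": percentile(latencies_ms, 99),
    }


# Toy numbers, not real measurements.
print(summarize([12.0, 9.5, 10.1, 48.3, 11.2]))
```

Running the same summary over the Node and Go runs gives directly comparable numbers. The p99 will surface slow outliers that a mean can hide.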

A Kubernetes migration (and return)

This month our systems team created a new Kubernetes 1.4.4 cluster to transition from our 1.3.0 cluster. We were hoping to take advantage of some new features and to get into the rhythm of keeping pace with Kubernetes minor releases. We were excited to have a highly available master for our cluster and to start experimenting with ScheduledJobs (renamed CronJobs in 1.5).

After the transition, we saw performance degrade across the board, along with high resource consumption. After much evaluation and experimentation, we decided to migrate our services back to our original 1.3 cluster for more predictability and reliability. We’ve paused migration efforts for now and hope to spend time in January experimenting more, likely with a 1.5.x cluster, leaving more time for testing.

Wrapping up 2016 with an engineering all hands

We wrapped up the engineering team’s year with an all-hands meeting before many of us went on vacation. Here you can see a fun snap of many Buffer devs!

Many of us were out over the holiday season for some vacation and disconnected time with friends and family, and we’re so happy that a smaller report this month reflects our teammates having that vital recharge time.

Over to you!

What would you like to learn more about? Anything we could share more of? We’d love to hear from you in the comments!
