Buffer Engineering Report

November 2016

Requests for buffer.com

203 m -1.9%

Avg. response for buffer.com

265 ms -5.4%

Requests for api.bufferapp.com

430 m -59.8%*

Avg. response for api.bufferapp.com

196 ms +100%

Code reviews given

63% of pull requests -3%

*We offloaded nearly 60% of requests to api.bufferapp.com onto the Kubernetes cluster with the new links service! The links service was heavily cached, which caused average response from api.bufferapp.com to appear artificially low.
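To see why the average can double without anything actually getting slower, here is a rough sketch of the blended-average arithmetic. The 430 m requests and 196 ms figures come from the stats above; the offloaded group's size and its 30 ms response time are purely illustrative assumptions, not measured values.

```typescript
// A group of requests with its own average response time.
interface TrafficGroup {
  requestsMillions: number;
  avgResponseMs: number;
}

// Request-weighted average response time across several groups.
function blendedAverageMs(groups: TrafficGroup[]): number {
  const totalRequests = groups.reduce((sum, g) => sum + g.requestsMillions, 0);
  const totalTime = groups.reduce(
    (sum, g) => sum + g.requestsMillions * g.avgResponseMs,
    0
  );
  return totalTime / totalRequests;
}

// Reported above: traffic that stayed on api.bufferapp.com in November.
const remaining: TrafficGroup = { requestsMillions: 430, avgResponseMs: 196 };

// ASSUMPTION (hypothetical numbers): the heavily cached links traffic
// that moved to the Kubernetes cluster.
const offloadedLinks: TrafficGroup = { requestsMillions: 640, avgResponseMs: 30 };

const before = blendedAverageMs([remaining, offloadedLinks]);
const after = blendedAverageMs([remaining]);

console.log(`before offload: ${before.toFixed(1)} ms, after: ${after.toFixed(1)} ms`);
```

With these assumed numbers the blended average sits at roughly 97 ms while the fast, cached requests are included, and jumps to 196 ms once they are gone, even though the remaining requests are no slower than before.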

Buffer Kubernetes Cluster

- 9 nodes in cluster

- 124 pods

- 660 million requests handled

- Serving 51% of total traffic

Bugs & Quality

- 3 S1 (severity 1) bugs: 20 opened, 17 closed (85% smashed, up 1% from October)

- 11 S2 (severity 2) bugs: 33 opened, 22 closed (66% smashed, down 2% from October)

Latest Engineering productivity hack: Deep Work Wednesdays

November was our first full month of Deep Work Wednesdays: no meetings and minimal Slack use for our engineering and product team.

Our Data team experimented with a similar version of this idea with their two-day Hackday event, and that inspired our team to come up with a way to get some intensive work done throughout the quarter.

It’s been really refreshing and energizing to have a mid-week day of calm zen focus where we can put our heads down.

This is the first major step we’ve taken in actively experimenting with ways to balance focus with collaboration and staying a tight-knit team.

There were a few interesting side effects! Having a day with most engineers off Slack meant we had to become more disciplined with our weekday On-Call schedule for emergency responses, as we couldn’t rely on folks being around Slack all day to spot trouble. Steven has gotten the team into a much clearer schedule of key people set up to receive and respond to emergency situations.

Another interesting effect was that our product managers have been keen on joining in and teammates from all different walks of Buffer, from Happiness to Design, have been really interested in our little experiment and might try it out themselves! Perhaps this is a simple, scalable experiment you can try on your team, too?

Let’s make it snappy: Focusing on web performance

Federico has been leading a focus on performance in the Buffer dashboard to help ensure all actions in the dashboard are nice and snappy.

These efforts are driven by the Task Performance Indicator framework which keeps the focus on the tasks our users are trying to do, and the performance of the code that executes these tasks. This way, Federico is able to make the highest-impact optimizations for our customers!

Mike has also been exploring service workers to help speed up our regularly used static content. There are still some challenges around keeping static files fresh when we deploy multiple times a day.

We’ve explored some strategies, but haven’t quite found a great solution yet. If you know of any great solutions that have a minimal impact on user experience, it’d be amazing if you shared them in the comments!
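One commonly used pattern for the freshness problem, sketched here as an assumption rather than anything we’ve settled on, is to name the static cache after the current deploy and purge caches from older deploys when the new service worker activates. The `static-` prefix, the deploy version string, and all function names below are hypothetical.

```typescript
// ASSUMPTION: hypothetical cache prefix; the deploy version would be
// something like a build hash injected at deploy time.
const CACHE_PREFIX = "static-";

// Cache name tied to one specific deploy.
function cacheNameFor(deployVersion: string): string {
  return `${CACHE_PREFIX}${deployVersion}`;
}

// Names of our caches that belong to earlier deploys and can be deleted.
// Caches without our prefix are left alone.
function staleCacheNames(existing: string[], deployVersion: string): string[] {
  const current = cacheNameFor(deployVersion);
  return existing.filter(
    (name) => name.startsWith(CACHE_PREFIX) && name !== current
  );
}

// Minimal subset of the browser CacheStorage API that this sketch relies on.
interface CacheStorageLike {
  keys(): Promise<string[]>;
  delete(name: string): Promise<boolean>;
}

// Deletes caches from earlier deploys and returns the names it removed.
async function purgeStaleCaches(
  storage: CacheStorageLike,
  deployVersion: string
): Promise<string[]> {
  const stale = staleCacheNames(await storage.keys(), deployVersion);
  await Promise.all(stale.map((name) => storage.delete(name)));
  return stale;
}
```

Inside a service worker, `purgeStaleCaches` could be passed the global `caches` object from the `activate` handler, wrapped in `event.waitUntil(...)`. The trade-off is that every deploy starts with an empty cache, so with several deploys a day the benefit shrinks; content-hashed file names are another option worth weighing.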

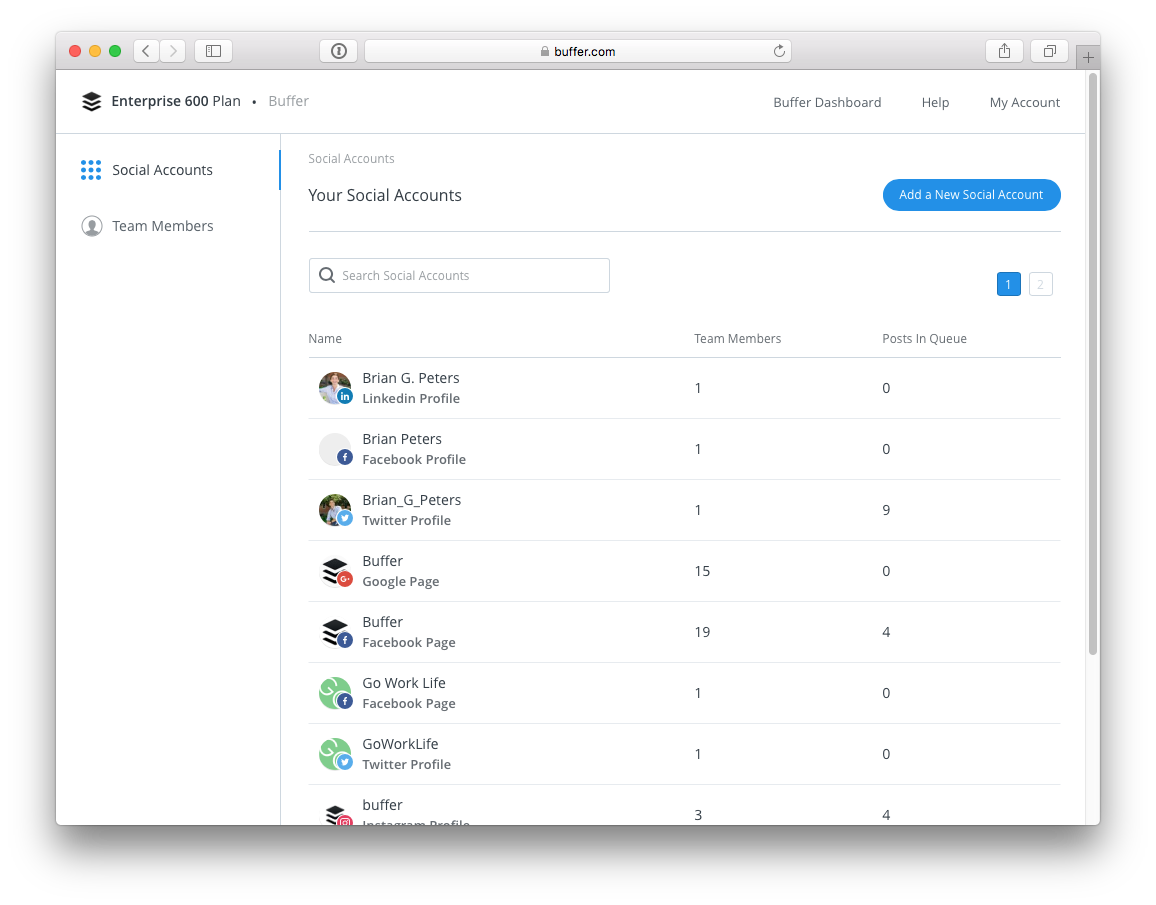

Business customers: Buffer’s new way to manage your team!

We’re excited and proud that Hamish has rolled out the Organizational Admin, a new way for you to manage your Buffer for Business social media profiles and teammates, to 100 percent of our users!

Look out for the prompts to set up your team as an organization – it’s a much smoother flow that we hope will make life a lot easier for all our Buffer for Business customers.

The rollout itself has been one of the smoothest to date.

Well done, Hamish, for your excellent stewardship of the project, and well done to fellow crafters Alex, Dan and José, and to Colin for organizing and leading our first quality assurance “Stop and Hammer” drill.

Over several days, Colin spearheaded a serious quality assurance push in which he, Tom, I and others on the team jumped in to actively try to break the Organizational Admin. We were glad to kick up a few bugs and interesting scenarios before our users did! That drill was really key in making sure the final version was rock solid and the rollout the smoothest one we’ve had yet.

A brand new Android composer

Available now to all Android users, a brand-new composer!

The architecture was designed by Joe using the MVP (Model-View-Presenter) pattern with RxJava, and there are a lot of user interface and user flow improvements that make sharing on your Android device easier and faster!

Marcus and Joe have released this big refactor of the Android Buffer update composer into the wild!

A lot of hard work and thorough testing went into this major from-the-ground-up rewrite of the Android composer.

A GIF of our old composer is up above, and below you can see the new version. Hope you enjoy it!

Coming up very soon are in-app purchases for Android, which are now in the testing phase. We’re really excited to allow folks to switch plans and upgrade right within the app! Joe and Marcus are also working on some super slick new UI improvements for the big v6.0.0 release, coming to Google Play soon!

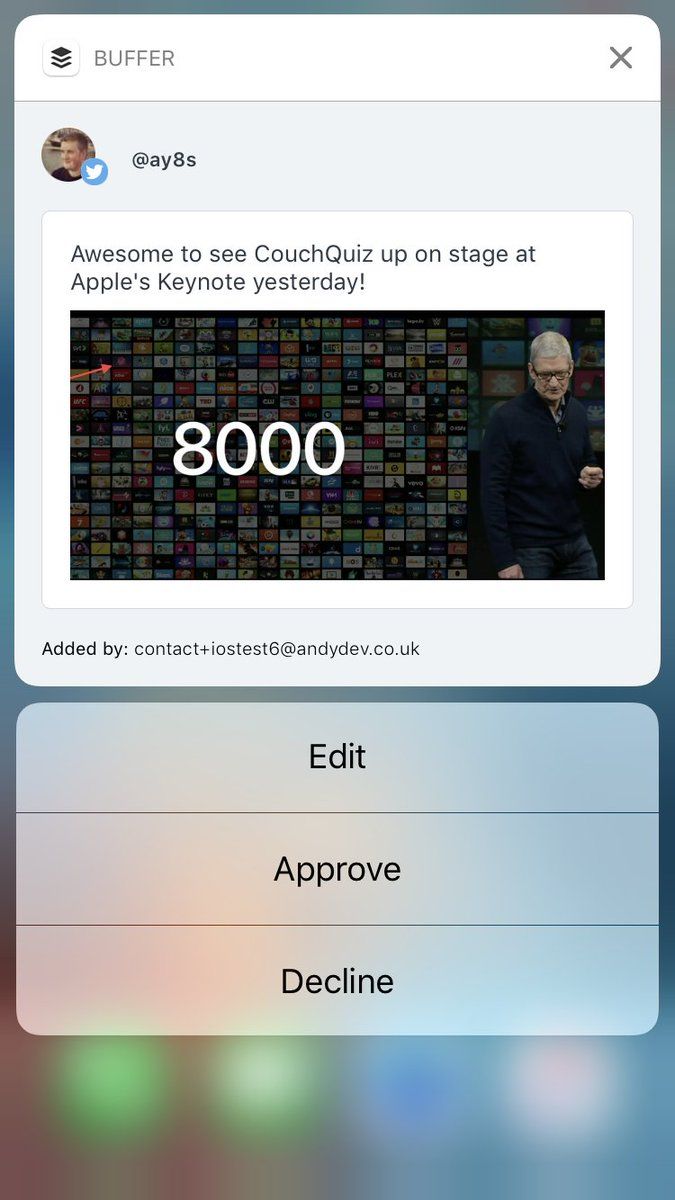

iOS updates: now with 3D Touch notifications

This week, Andy will be heading to AsyncDisplayKit’s 2.0 launch event at Pinterest.

We’re super interested to try the new Declarative API for Tables and Collections, which will allow us to remove a bunch of code throughout the app. We switched our image library code to Pinterest’s PINRemoteImage: it’s used throughout AsyncDisplayKit, so it made sense to rely on a single dependency. It also allows us to improve Instagram Reposting speed by prefetching images.

Contribution Notifications are now available on iOS! If you have a 3D Touch-enabled device, you can now use 3D Touch notifications to view a preview along with actions to Approve, Dismiss and Edit.

We’ve refactored the Settings view to make adding and removing options for specific profiles easier in the future. For example, we’re currently showing Touch ID for all devices, even those without Touch ID capabilities.

Continuing on our path to 99.9% crash-free, the current iOS app version is at 99.85%. We also released a sticker pack within Buffer which contains our Buffer Values as stickers. More stickers coming soon!

Speaking at KubeCon on our transition to microservices

Dan gave a great talk at KubeCon on How Kubernetes Was the Secret Sauce in Our Globally Distributed Team’s Transition to Microservices.

KubeCon is an incredible conference and we’re so excited that Dan flew the Buffer flag alongside technical leaders like Sam Ghods (cofounder of Box) and software architects from IBM, Comcast, Twitter, Microsoft, PayPal and Google.

He brought back a lot of great learnings to the team, from the big-picture future vision (Kubernetes becoming the operating system for clusters!) to specifics like using Helm for package management and how to debug and find errors much faster by using OpenTracing to trace the flow of a transaction across many services.

We’re all really proud of the work that Dan, as architect, and our systems team of Steven, Adnan and Eric have done to distinguish Buffer as an early adopter of Kubernetes for container orchestration.

You can read more about how we planned for and took the plunge with a big re-architecture here. This move to a service-oriented architecture will make development at Buffer faster and help Buffer run more reliably for our users than ever before.

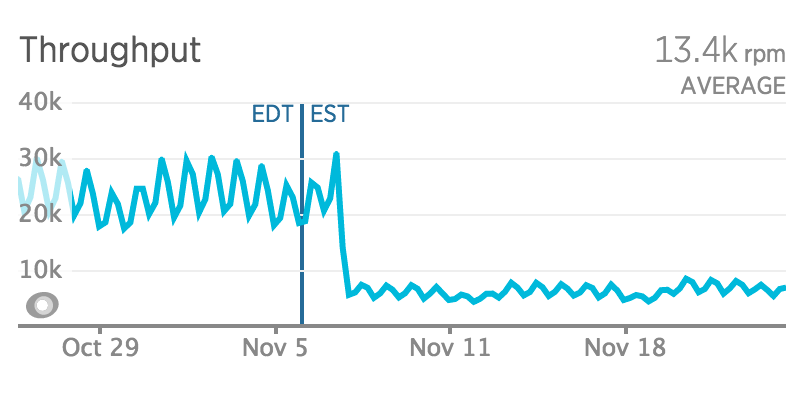

Speaking of speed… Buffer links is our first sub-millisecond service!

Harrison rebuilt our old Buffer links service, which tracks how many times a link has been shared and also powers our “Buffer buttons,” to be its own service running on Kubernetes. You can read all about the dragons slain and lessons learned along the way here.

It was a big one to rebuild owing to the heavy load – in its first 15 hours of handling production traffic, it served 15.2 million requests!

With the links service offloading a ton of traffic onto our Kubernetes cluster, we’ve seen traffic served by the API down 56% in November:

Currently we have 8 containers handling this load from the links service with sub-millisecond response times. This week’s average was a blazing 0.764 milliseconds. We hope you enjoy those super-fast shares!

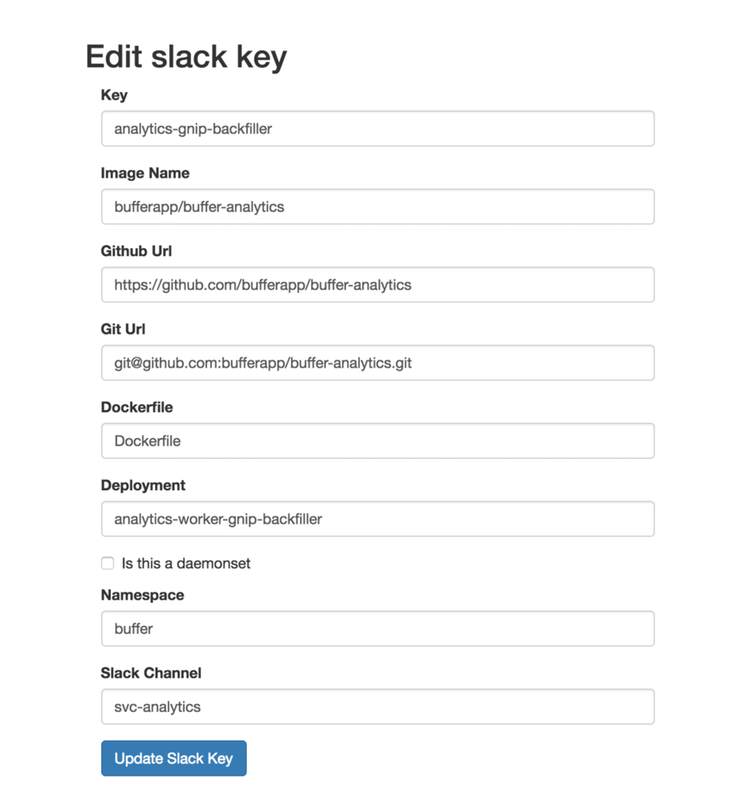

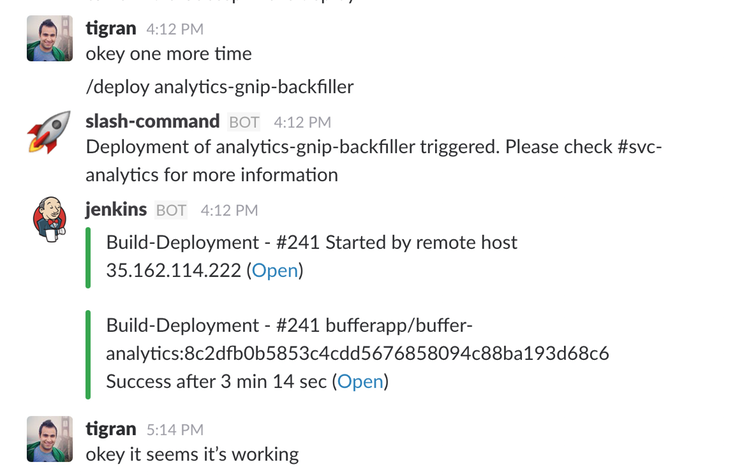

A Kubernetes dashboard for easier deployments

Adnan built a great dashboard, affectionately known as “Kuberdash,” which makes it really easy to deploy a new service with a Slack bot!

Here’s an example of Tigran setting up a deployment for an analytics microservice – you create the deployment in the dashboard and then it’s really easy to deploy straight from Slack!

Steve is now our first dedicated UI Developer

Steve started out as a product designer for Buffer. He’s slowly transitioned to the engineering team by taking on smaller tasks, working with the marketing team and learning React in his spare time. Once we realized that Steve was on a fast track to becoming a full-fledged engineer, we knew we had to jump at the opportunity to use his design background for even more impact. Luckily, Steve also had this exact idea in mind.

He’s now in charge of working with the design and engineering teams to make sure our design systems are easy to use and that we hold our product to a very high bar of polish.

Over to you

Is there anything you’d love to learn more about? Anything we could share more of? We’d love to hear from you in the comments!

